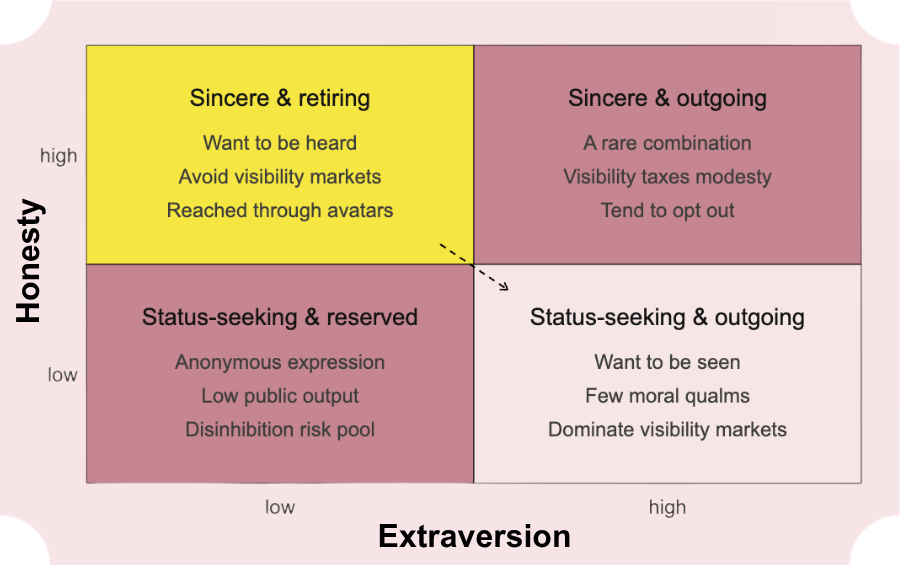

The New Yorker is worried about A.I. social media avatars, so worried that its editors just showed their hand with the very rare, very un-Eustace Tilly screamer of a search headline: “A.I. Is Making Influencing Even Faker.” Regrettable wording aside (let’s not assign A.I. agency just yet), that hed rests on a false premise: that influencing could get any faker. Nope. Per a 2016 study [1], low scores on personality measures of sincerity are highly predictive of selfie posting. Per a 2011 Ohio State study, self-promotional behavior is positively correlated with academic cheating. In other words, the personality market driving the influencer economy already selects for indifference to the truth. The fakeness is human. Blaming avatars for fakeness is like blaming Ozempic for vanity.

In fact, as Vogue recently noted, avatars may create more truth-seeking influencers. If sincere people are less likely to post selfies, and avatars eliminate the need for selfies, then more sincere people can become influencers. There’s precedent. Consider NoFilterPhilosophy, an Insta account with more than 600K followers featuring a cartoon dog opining on Wittgenstein. The videos are produced with Adobe Character Animator using a 2D puppet rig. That’s a real, albeit surmountable, barrier to entry for the faceless creator. As A.I. lowers that barrier, more sincere and well-read thinkers will be able to script characters into the feed without succumbing to the pressures of its current personality market. Will Pynchon become a VTuber? No. But he could.

In 1993, Peter Steiner drew a New Yorker cartoon of two dogs at a computer. One says to the other: “On the Internet, nobody knows you’re a dog.” That’s the old paradigm. Going forward, the humans worth following may be passing as dogs online.